Brands That Have Chosen Churchill Systems

Seasonality

Promos

Pricing

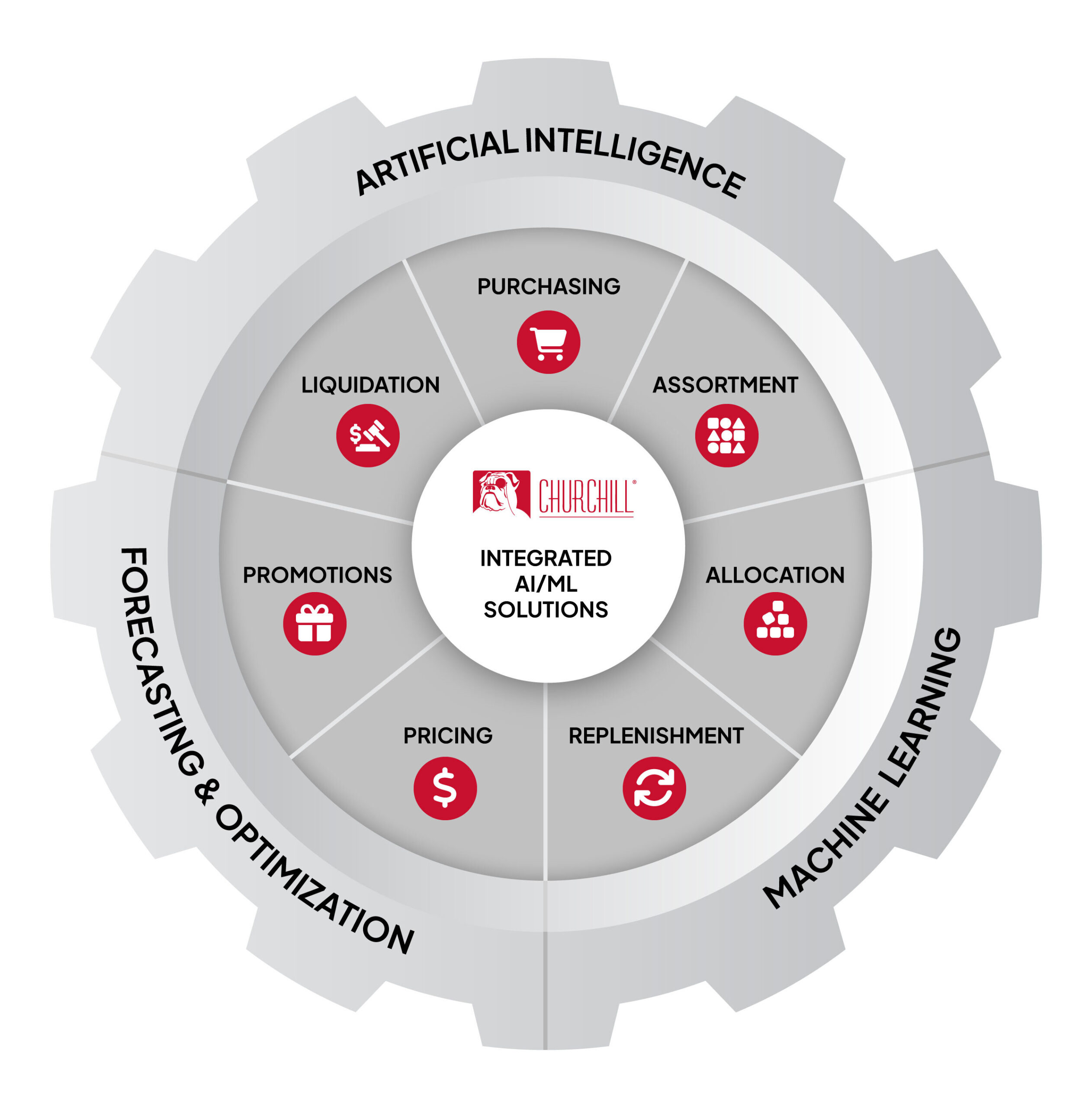

AI Software For Every Retail Challenge

For 35 years, Churchill Systems Inc. has been providing AI-based machine learning software for every aspect of the retail life cycle. From Merchandise Planning to Supply Chain, to Pricing and Promotions, Churchill has the software to propel your existing systems to the next level.

Click on the Retail Wheel to learn more about Churchill’s unique software solutions.

Purchasing

Legacy systems often determine volume using last year’s sales figures. With Churchill AI, get recommendations for Total Buy quantities based on upcoming customer demand.

Assortment

Finding the right mix of products, styles, and sizes is often done through trial and error. Churchill AI analyzes demand history to optimize Assortments by location throughout the year.

Allocation

Proper distribution of product across locations is key to high customer service levels and a profitable category. Demand-based Allocation reduces out-of-stocks and ensures that each location maximizes potential sales at the SKU level.
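To make the idea of demand-based allocation concrete, here is a minimal sketch that splits a fixed quantity across locations in proportion to each location's forecast demand. It is an illustration only, not Churchill's method: the function name `allocate_by_demand`, the location labels, and the largest-remainder rounding rule are all assumptions made for this example.

```python
def allocate_by_demand(forecast, units):
    """Split `units` across locations in proportion to forecast demand.

    Hypothetical helper for illustration. Assumes `forecast` maps a
    location name to a non-negative demand forecast.
    """
    total = sum(forecast.values())
    if total <= 0 or units <= 0:
        return {loc: 0 for loc in forecast}

    # Ideal fractional share for each location.
    shares = {loc: units * demand / total for loc, demand in forecast.items()}

    # Start from the whole-unit part of each share.
    alloc = {loc: int(share) for loc, share in shares.items()}

    # Give leftover units to the locations with the largest fractional remainders.
    leftover = units - sum(alloc.values())
    by_remainder = sorted(shares, key=lambda loc: shares[loc] - alloc[loc], reverse=True)
    for loc in by_remainder[:leftover]:
        alloc[loc] += 1
    return alloc


# Example with made-up forecasts for three stores.
plan = allocate_by_demand({"Store A": 50, "Store B": 30, "Store C": 20}, units=7)
```

A production allocation would also account for on-hand inventory, pack sizes, and minimum presentation quantities, which this sketch leaves out.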

Replenishment

A reliable demand forecast is the foundation of a sound replenishment system. Churchill combines detailed seasonal profiles with dynamic algorithms to produce a high-volume, basic-item forecasting application for everyday items.
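As a rough illustration of how a seasonal profile can be combined with an adaptive base level, the sketch below builds seasonal indices from sales history and applies them to an exponentially smoothed level. This is a generic textbook approach, not a description of Churchill's algorithms: the function names, the smoothing factor `alpha`, and the sample data are assumptions, and the code expects strictly positive sales covering at least one full seasonal cycle.

```python
def seasonal_profile(history, period):
    """Return one index per position in the cycle, relative to the overall mean."""
    overall = sum(history) / len(history)
    profile = []
    for pos in range(period):
        values = history[pos::period]  # all observations at this cycle position
        profile.append((sum(values) / len(values)) / overall)
    return profile


def forecast(history, period, horizon, alpha=0.3):
    """Forecast `horizon` periods ahead: smoothed base level times seasonal index."""
    profile = seasonal_profile(history, period)

    # Track the base level by smoothing the deseasonalized sales.
    level = history[0] / profile[0]
    for t, sales in enumerate(history[1:], start=1):
        level = alpha * (sales / profile[t % period]) + (1 - alpha) * level

    # Re-apply the seasonal index for each future period.
    n = len(history)
    return [level * profile[(n + h) % period] for h in range(horizon)]


# Example with made-up quarterly sales for two years.
next_year = forecast([10, 20, 30, 40] * 2, period=4, horizon=4)
```

With perfectly repeating history like this sample, the forecast simply reproduces the seasonal pattern; real data would show the smoothed level drifting as demand changes.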

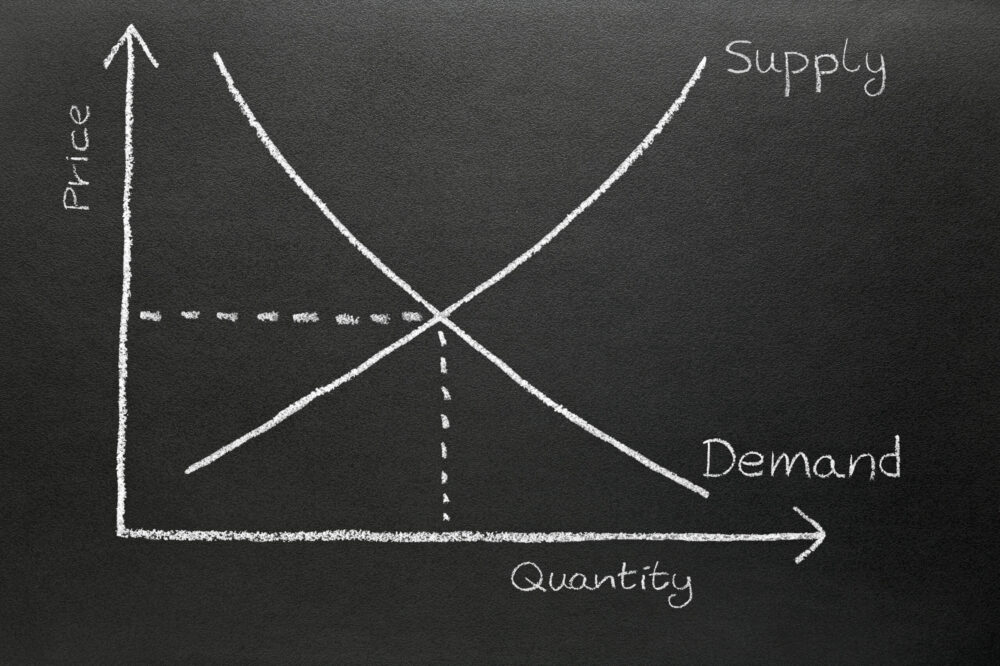

Pricing

Each segment of a product’s lifecycle has its own unique pricing challenges. From new item introduction, to regular pricing, markdown optimization and clearance, Churchill’s Machine Learning software optimizes pricing every step of the way.

Promotions

Today’s promotions are complex activities that involve dozens of unique scenarios. Churchill’s AI-based neural network technology considers 30+ factors to generate SKU-level forecasts.

Liquidation

From Markdowns to Clearance, each item has its own objectives and goals to reach. Churchill AI optimizes each forecast and price recommendation at the SKU level to meet end-of-season goals.

Merchandise Planning

Refine your merchandising activities from start to finish using integrated AI technologies every step of the way. From initial purchase quantities and store level assortments, to size ranges and assortment cannibalization, Churchill’s software solutions provide the intelligence required for every step in the product life cycle.

- Purchasing

- Assortment

- Lifecycle Forecasting

- New Items/Special Buys

Supply Chain

Today’s retail supply chain is a vast and complex network of warehouses, distribution centers, multi-format storefronts and e-commerce platforms. Churchill’s advanced machine learning software provides deep insights for allocation, replenishment, and more. Ship product based on future demand to minimize localized out-of-stock situations and maximize customer service levels.

- Seasonality

- Allocation

- Sell-Thru Forecasting

- Inventory Replenishment

Pricing & Promotions

Today’s retail environment is increasingly competitive. Churchill has the tools to provide pricing recommendations for new products, regular demand, promotions and markdowns, based on preset objectives. Get the most out of promotions by understanding the impact of combined marketing and pricing actions on future demand. Churchill’s AI software maximizes revenue and gross margins by predicting demand at the item/store level of detail.

- Regular Pricing

- Promotional Demand

- Cannibalization/Halo

- Markdown Price Optimization

- Clearance & Liquidation

Enhance Your Retail Planning With AI-based Demand Intelligence

A major breakthrough occurred in 2001, when Churchill engineers, using AI neural network technology, discovered how to significantly improve the efficiency of AI-based retail forecasting applications while drastically reducing the amount of historical data required to build the forecasting and analytic models.

“Churchill Systems gave Yellow a roadmap to a comprehensive and integrated forecasting solution.”

Dr. Douglas Avrith

President & CEO, Yellow Shoes

Increase in sales

Customer Engagement